The End of Manual Debugging: Advanced Strategies for Resolving Spreadsheet Formula Errors

For decades, data professionals and financial analysts have been forced to accept a fundamentally flawed working environment. The traditional formula bar, with its limited visibility and lack of structural formatting, is a relic of the past that severely hinders productivity. Attempting to parse complex logical statements within a single unformatted line of text is a recipe for cognitive overload and inevitable mistakes. It is time to completely dismantle our reliance on manual debugging.

The true cost of manual troubleshooting extends far beyond mere frustration. Every minute spent tracing a missing parenthesis or deciphering an undocumented legacy formula is a minute taken from strategic data analysis. When your financial models drive critical business decisions, relying on rudimentary error checking introduces unacceptable levels of risk. We must move past trial-and-error methods and embrace a more systematic, architectural approach to spreadsheet logic.

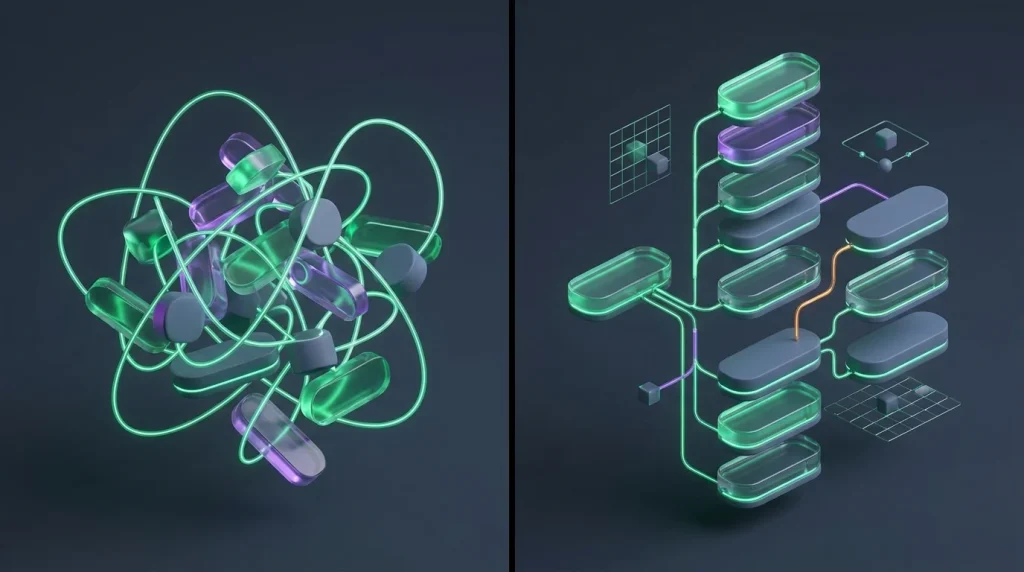

This campaign to elevate your spreadsheet experience begins by exposing the inherent weaknesses of native formula editors. We will explore why relying on deep nesting is a symptom of poor data architecture rather than a display of technical prowess. By adopting modern techniques, such as custom variables and the working habits of integrated development environments (IDEs), you can catch most syntax errors before they reach a cell and sharply reduce your troubleshooting turnaround time.

The Anatomy of a Formula Failure

Understanding why traditional troubleshooting fails requires an honest examination of the tools we use daily. Native formula bars were designed for basic arithmetic, not enterprise-grade financial modeling. When you attempt to build intricate data pipelines within an interface designed for simple summation, structural failures become far more likely. The environment actively works against the user by stripping away crucial context and visual hierarchy.

Furthermore, the native environment gives a formula's structure no visual form: nesting depth, argument boundaries, and logical branches all look the same. Because everything is compressed into a continuous alphanumeric string, the human brain is forced to act as a compiler. You are mentally mapping dependencies, tracking logical branches, and counting parentheses simultaneously. This multitasking drains attention quickly and directly contributes to late-afternoon transposition errors.

Why Deep Nesting is an Architectural Failure

There is perhaps no greater symbol of manual debugging misery than the deeply nested conditional statement. For years, spreadsheet practitioners believed that stacking countless conditions within one another was a demonstration of advanced skill. In reality, it is an architectural failure. Every additional layer of nesting adds another branch the reader must hold in mind and further obscures the business logic the model is supposed to execute.

- Deep nesting raises the risk of logical transposition errors through sheer textual density.

- Native editors provide almost no visual hierarchy to distinguish between separate conditional pathways.

- Subsequent analysts inherit an unreadable text block that requires hours of tedious reverse engineering to decipher.

- Calculation performance can degrade when the same lookup or sub-calculation is repeated inside several branches.

When a nested statement inevitably fails, the native editor provides little context about which specific branch triggered the error. Analysts end up highlighting segments of the formula and forcing them to calculate one at a time to find the breakdown point. This manual tracing is precisely the type of low-value task that advanced troubleshooting methodologies seek to eliminate. We must replace deep nesting with scalable, transparent alternatives.

Identifying Syntax Errors Versus Logical Flaws

Mastering advanced debugging requires understanding the fundamental difference between syntax errors and logical flaws. Syntax errors are structural violations of the spreadsheet language, such as omitting a required comma or failing to close a text string. Because the native environment validates a formula only when you commit it, these mistakes surface late and produce a generic parse error that provides minimal diagnostic value to the end user.

Conversely, logical flaws are silent and far more dangerous. A logically flawed formula computes successfully and returns a value, but that value is wrong because of flawed reasoning or incorrect assumptions. Detecting these errors requires a thorough understanding of both the data context and the mathematical operations being applied. Manual inspection alone rarely exposes complex logical inconsistencies within massive datasets.
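As a hedged illustration of the difference, consider a hypothetical discount rule (the cell A2 and the thresholds are assumptions, not taken from any real model). Both formulas below are syntactically valid and return a number, but in the first the 10% branch can never be reached, because any amount of 5000 or more has already satisfied the first test:

```excel
=IF(A2>=1000, 0.05, IF(A2>=5000, 0.10, 0))
=IF(A2>=5000, 0.10, IF(A2>=1000, 0.05, 0))
```

The second version tests the highest threshold first. No parser would flag the first formula; only knowledge of the intended business rule reveals the flaw.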

Addressing the Infamous Object Object Syndrome

As spreadsheets increasingly intersect with external scripts and application programming interfaces, new categories of complex errors have emerged. A classic example is the infamous [object Object] output, which occurs when a script attempts to return a complex data structure to a cell instead of a primitive value. Instead of displaying the anticipated revenue figures, the cell displays the default string representation of a generic JavaScript object.

This specific error exposes a fundamental disconnect between modern data pipelines and traditional spreadsheet environments. Resolving an [object Object] output requires moving beyond basic spreadsheet syntax and implementing robust data parsing within the connecting script. Data professionals must ensure that types are checked before returning and that only single primitive values, or arrays of primitive values, are passed back to the spreadsheet grid.

Deconstructing the Nested IF Nightmare

To truly dismantle traditional debugging, we must address the root cause of most syntax errors. By intentionally avoiding deep conditional branching, you remove the main source of missing-parenthesis and argument-mismatch errors. The solution lies in extracting hardcoded logic from the formula bar and placing it into structured, external reference grids. This strategy is known as logic uncoupling.

Using a dedicated configuration sheet to house lookup tables transforms rigid conditions into dynamic, manageable data points. Instead of burying discount thresholds or categorizations within a dense text string, you place them in a highly visible structured grid. Your calculation cell then uses a lookup function such as XLOOKUP or INDEX/MATCH to retrieve the correct value based on the input parameters. This separation matters because a threshold can then change without anyone editing a formula.
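A minimal sketch of this uncoupling, under stated assumptions: a hypothetical sheet named Config holds ascending order-amount thresholds in A2:A5 (0, 1000, 5000, 10000) and the matching discount rates in B2:B5, and the order amount sits in A2 of the calculation sheet. None of these names or values come from a real workbook. The first formula is the hardcoded nested version; the second retrieves the same rate from the grid:

```excel
=IF(A2>=10000, 0.15, IF(A2>=5000, 0.10, IF(A2>=1000, 0.05, 0)))
=XLOOKUP(A2, Config!$A$2:$A$5, Config!$B$2:$B$5, 0, -1)
```

The fifth argument, -1, asks XLOOKUP for an exact match or the next smaller threshold, which reproduces the tiered behavior. Adding a new tier then means adding a row to the Config range and widening it, not rewriting the formula.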

Transitioning to switch logic or boolean math also offers a powerful alternative to traditional nested statements. The SWITCH function evaluates a single expression against a list of candidate values and returns the corresponding result, without any nested conditional calls. Similarly, multiplying boolean arrays can handle multiple-criteria scenarios in one flat expression, bypassing conditional branching entirely and leaving far fewer places for a syntax error to hide.
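Both alternatives can be sketched briefly. The region codes, rates, sheet name Sales, and ranges below are illustrative assumptions. The first formula maps a region code in B2 to a rate and ends with a default for unmatched values; the second sums column D of the hypothetical Sales sheet for rows where the region is EMEA and the amount in column C is at least 1000:

```excel
=SWITCH(B2, "EMEA", 0.20, "APAC", 0.18, "AMER", 0.22, "UNKNOWN REGION")
=SUMPRODUCT((Sales!$B$2:$B$500="EMEA") * (Sales!$C$2:$C$500>=1000) * Sales!$D$2:$D$500)
```

In the SUMPRODUCT version each comparison yields an array of TRUE/FALSE values that multiplication coerces to 1 and 0, so a row contributes its column D value only when every criterion holds. This assumes column D contains numbers only; text in that range would produce a #VALUE! error.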

Introducing Custom Variables and Modern Functions

Once you escape the constraints of the traditional formula bar, you can leverage native features to define custom variables. The LET function, available in current versions of Excel and Google Sheets, lets you assign a descriptive name to a calculation and reuse that name throughout your logical statement. Defining custom variables removes the need to repeat the same expensive calculation several times within the same cell.

Consider a scenario where you must calculate a complex discount rate based on multiple external dependencies. In a legacy setup, the entire lookup and calculation logic would be duplicated inside every logical branch. By using custom variables, you can evaluate the lookup once, assign it to a clearly named identifier, and simply reference that identifier in your final conditional logic. Every branch then works from the same value.
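A hedged sketch of that scenario using LET follows. The cell layout (order amount in A2, quantity in B2, unit price in C2), the Config sheet, and the variable names are all assumptions made for illustration:

```excel
=LET(
    discount_rate, XLOOKUP(A2, Config!$A$2:$A$5, Config!$B$2:$B$5, 0, -1),
    gross_amount, B2 * C2,
    IF(discount_rate > 0, gross_amount * (1 - discount_rate), gross_amount)
)
```

The lookup is written once and referenced twice by name. Line breaks and indentation inside a formula are accepted by both Excel and Google Sheets, so the structure can be made visible even in the native editor.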

Implementing custom variables also provides a major operational advantage during the debugging phase. If the final output is incorrect, you can temporarily change the last argument of the formula, its output expression, to return one of your intermediate variables instead. This allows you to inspect the exact value being generated at each stage of your logic without tearing the entire formula apart. It is a sophisticated, non-destructive diagnostic method.
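For instance, in the following sketch (cell layout, Config sheet, and names are hypothetical), the final argument would normally be net_amount. Swapping it for discount_rate makes the cell show the looked-up rate so it can be checked on its own:

```excel
=LET(
    discount_rate, XLOOKUP(A2, Config!$A$2:$A$5, Config!$B$2:$B$5, 0, -1),
    gross_amount, B2 * C2,
    net_amount, gross_amount * (1 - discount_rate),
    discount_rate
)
```

Restoring the last line to net_amount returns the formula to its production behavior; nothing else in the formula has to change.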

Adopting IDE-Like Environments for Spreadsheets

The most significant paradigm shift in recent spreadsheet evolution is the introduction of advanced formula environments. These specialized interfaces mimic the functionality of traditional integrated development environments utilized by professional software engineers. By providing a dedicated workspace with syntax highlighting and automatic indentation, these modern tools completely transform how data professionals interact with complex analytical logic on a daily basis.

| Debugging Element | Traditional Native Editor | Modern Integrated Environment |
|---|---|---|
| Visual Hierarchy | Flat single-line text string | Indented multi-line logical structure |
| Error Identification | Manual parenthesis counting | Real-time continuous syntax highlighting |
| Variable Scope | Global workbook references only | Locally scoped and isolated custom variables |
| Code Documentation | Separate manual documentation sheet | Inline textual comments directly alongside logic |

These advanced environments support inline comments and multi-line structural formatting without relying on awkward keyboard shortcuts. Financial analysts can finally document their assumptions and methodologies directly alongside the relevant mathematical operations. This level of transparency is a major gain for team collaboration, peer review processes, and strict audit compliance. When a logical block is properly formatted and annotated, it becomes a component that others can review and reuse with confidence.

Transitioning to an advanced environment also intrinsically encourages a more modular approach to formula design. Instead of attempting to solve every complex business constraint within a single cell, professionals can draft independent logical modules and test them iteratively. This iterative testing process mirrors professional software development lifecycles and drastically reduces the probability of catastrophic failures occurring in live production financial models.
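One way to sketch such a module is with LAMBDA, which exists in current versions of Excel and Google Sheets. The parameter names and the name NetAmount are hypothetical. The first line tests the module in a cell by calling it inline with sample arguments (10 units at 25 with a 10% discount, which evaluates to 225). The second line shows the call once the LAMBDA has been saved under the name NetAmount, for example through Excel's Name Manager:

```excel
=LAMBDA(quantity, unit_price, discount_rate, quantity * unit_price * (1 - discount_rate))(10, 25, 0.1)
=NetAmount(B2, C2, D2)
```

Testing the inline form against a few known cases before naming it mirrors a unit test: the module is verified in isolation, then reused wherever the calculation is needed.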

Advanced Architectural Strategies for Data Professionals

Elevating your spreadsheet experience requires a fundamental shift in how you perceive analytical work. You must stop viewing formulas as simple ad hoc mathematical operations and begin treating them as miniature standalone software applications. Professional data architecture demands professional-grade development workflows, rigorous testing protocols, and a commitment to scalable structural design that outlasts any individual analyst.

Step by Step Guide to Refactoring Legacy Formulas

- Extract the core calculation from the legacy cell and test it in isolation to establish a baseline output.

- Identify repeating sub-calculations and define them as locally scoped custom variables at the beginning of the logical flow.

- Replace deep conditional branches with dedicated lookup arrays positioned on a secure, centralized configuration sheet.

- Integrate robust error handling wrappers around the final logic to capture unexpected external inputs gracefully.

- Reassemble the final formula utilizing modern structural formatting, consistent indentation, and comprehensive inline documentation.
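The steps above can be sketched on a small hypothetical example. Assume a customer tier in A2, a quantity in B2, a unit price in C2, and a Config sheet listing tier names in A2:A4 (Gold, Silver, Bronze) with their rates in B2:B4 (0.15, 0.10, 0.05). The first formula is the legacy version; the second is one possible refactoring, not the only one:

```excel
=IF(A2="Gold",B2*C2*(1-0.15),IF(A2="Silver",B2*C2*(1-0.10),IF(A2="Bronze",B2*C2*(1-0.05),B2*C2)))

=LET(
    gross_amount, B2 * C2,
    discount_rate, XLOOKUP(A2, Config!$A$2:$A$4, Config!$B$2:$B$4, 0),
    IFERROR(gross_amount * (1 - discount_rate), "CHECK INPUTS")
)
```

The repeated B2*C2 becomes a single variable, the hardcoded tiers move to the configuration sheet, and an unknown tier falls back to a rate of 0 just as the legacy formula did. The IFERROR wrapper replaces a raw error, such as the #VALUE! produced by text in the quantity cell, with a visible flag.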

The persistent reliance on manual troubleshooting methodologies is actively stunting the professional growth of entire analytical departments. When your primary mechanism for resolving issues involves staring at a microscopic text box and attempting to mentally compile complex operations, you are engaging in a flawed process. This traditional approach treats calculation errors as inevitable workplace accidents rather than highly predictable structural anomalies.

Building Defensive Formulas for Long Term Stability

A truly robust spreadsheet model anticipates external failure and handles invalid parameters gracefully. Relying on default native error outputs disrupts subsequent dependent calculations and degrades overall sheet integrity. By wrapping your core mathematical logic in advanced error handling functions, you gain total control over exactly how the application responds to invalid data inputs. This prevents a single missing parameter from cascading into systemic failure.

Common formula error codes and their architectural solutions

The #VALUE! error typically indicates a data type mismatch, such as text where a number is expected, and often calls for type checking or intermediate data parsing. The #REF! error exposes brittle cell references that have been broken by deleting a column, row, or sheet, which can often be mitigated by referring to named ranges or table references instead of fixed cell addresses. The #NAME? error usually points to a misspelled function name or an unrecognized custom variable, underscoring the need for syntax highlighting and auto-completion.

However, applying blanket error suppression is a common analytical trap that masks underlying logical flaws. Using functions to return a completely blank cell when an error occurs might make the presentation look cleaner, but it destroys your ability to track missing source data. Instead, error handlers should return specific, highly visible warning flags that clearly indicate the nature of the failure.
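The contrast can be sketched with a lookup against a hypothetical Source sheet (the sheet name, ranges, and flag text are assumptions). The first formula hides every error behind an empty string; the second handles only the "not found" case and says which key is missing:

```excel
=IFERROR(VLOOKUP(A2, Source!$A$2:$C$500, 3, FALSE), "")
=IFNA(VLOOKUP(A2, Source!$A$2:$C$500, 3, FALSE), "MISSING IN SOURCE: " & A2)
```

Because IFNA intercepts only #N/A, any other error, such as a #REF! from a deleted column, still surfaces instead of being silently blanked.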

Integrating targeted evaluation functions into your primary logical flow allows for the creation of dynamic fallback mechanisms. If an exact match cannot be found in your primary data source, the formula can automatically redirect the search to a secondary historical archive. This level of dynamic routing transforms static mathematical operations into intelligent, self-correcting data processing pipelines.
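A minimal sketch of such a fallback, assuming two hypothetical sheets named Current and Archive that share the same layout (keys in column A, values in column C), uses the if_not_found argument of XLOOKUP to chain a second search:

```excel
=XLOOKUP(A2, Current!$A$2:$A$500, Current!$C$2:$C$500,
    XLOOKUP(A2, Archive!$A$2:$A$5000, Archive!$C$2:$C$5000, "NOT FOUND IN EITHER SOURCE"))
```

The inner lookup runs only when the key is absent from the primary range, and the final text flag keeps a complete miss visible rather than returning a blank.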

Conclusion: Elevating Your Data Architecture Logic

By dismantling the deeply ingrained habits of traditional spreadsheet usage, you unlock significant gains in analytical accuracy and model performance. The techniques discussed throughout this article, from lookup tables and custom variables to defensive error handling, are not merely theoretical concepts. They are practical, actionable structural strategies used daily by experienced data professionals.

Ultimately, the campaign to dismantle traditional spreadsheet troubleshooting is about reclaiming your valuable time and mental bandwidth. Data professionals are employed to analyze broad market trends, identify lucrative opportunities, and provide deep strategic insights, not to perform menial text parsing tasks. By automating syntax error detection and adopting modern visual debugging environments, you elevate your role from a reactive spreadsheet mechanic to a proactive data architect.